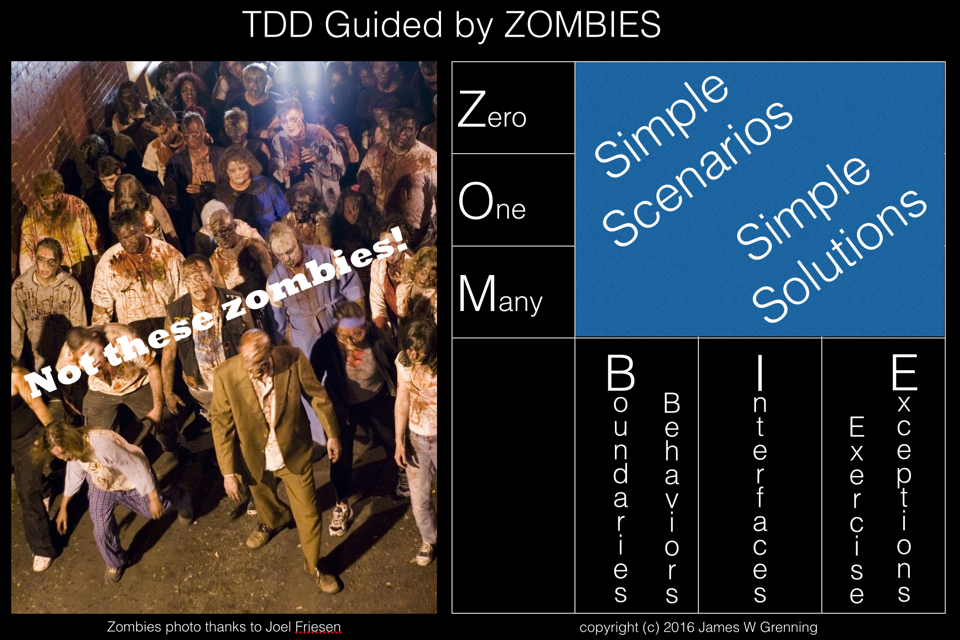

When I first used TDD, I read James Grenning’s book Test Driven Development for Embedded C. In it, James proposes a pattern for developing tests: test for zero, then one, then many (ZOM). Recently he has developed this idea further into ZOMBIE testing. Z – Zero, O – One, M – Many...

Tag: TDD

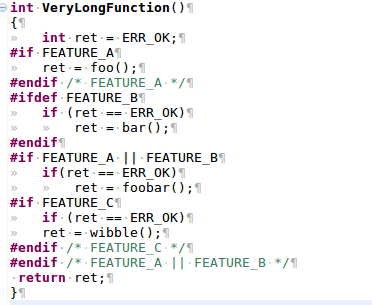

Refactoring C to Remove Feature Flags

You’ve read the books on Refactoring, on working with legacy code, on Unit Testing and on TDD. Then you look at the codebase you’ve inherited: it’s written in C, and it’s riddled with conditional compilation. Where do you start? In years gone by, feature flags were widely used in embedded systems as a means of having...

Developing the CODESYS runtime with TDD

I was recently working in the CODESYS runtime again, developing some components for a client, and I thought the experience would make the basis of a good post on bringing legacy code into a test environment to enable Test Driven Development (TDD). The CODESYS runtime is a component-based system, and for most device manufacturers is...

Using Google Test with CDT in Eclipse

I like to use test-driven development; currently my preferred framework is googletest. When I came to use Eclipse CDT with the MinGW toolchain, I found I had lots of little issues to get over to achieve what I wanted, namely a rapid TDD environment that I could also build from the command line...