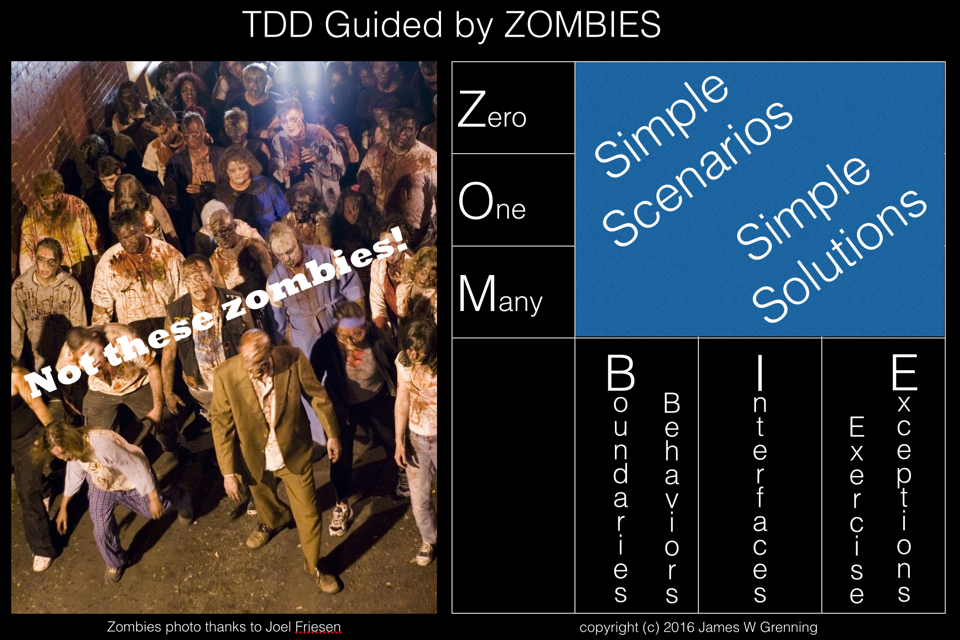

When I first used TDD I read James Grenning’s book Test Driven Development for Embedded C. In this book James proposed a pattern for developing tests: test for zero, then one, and then many (ZOM). Recently he has developed this idea further into ZOMBIE testing. Z – Zero O – One M – Many...

Author: David

The Pragmatic Programmer

I think I originally read The Pragmatic Programmer by Andrew Hunt and David Thomas a good ten or fifteen years ago. I’ve just taken a couple of days while between contracts to re-read the book. I was very pleased to find that the book is just as fresh as I remember: 70 great pragmatic tips to help...

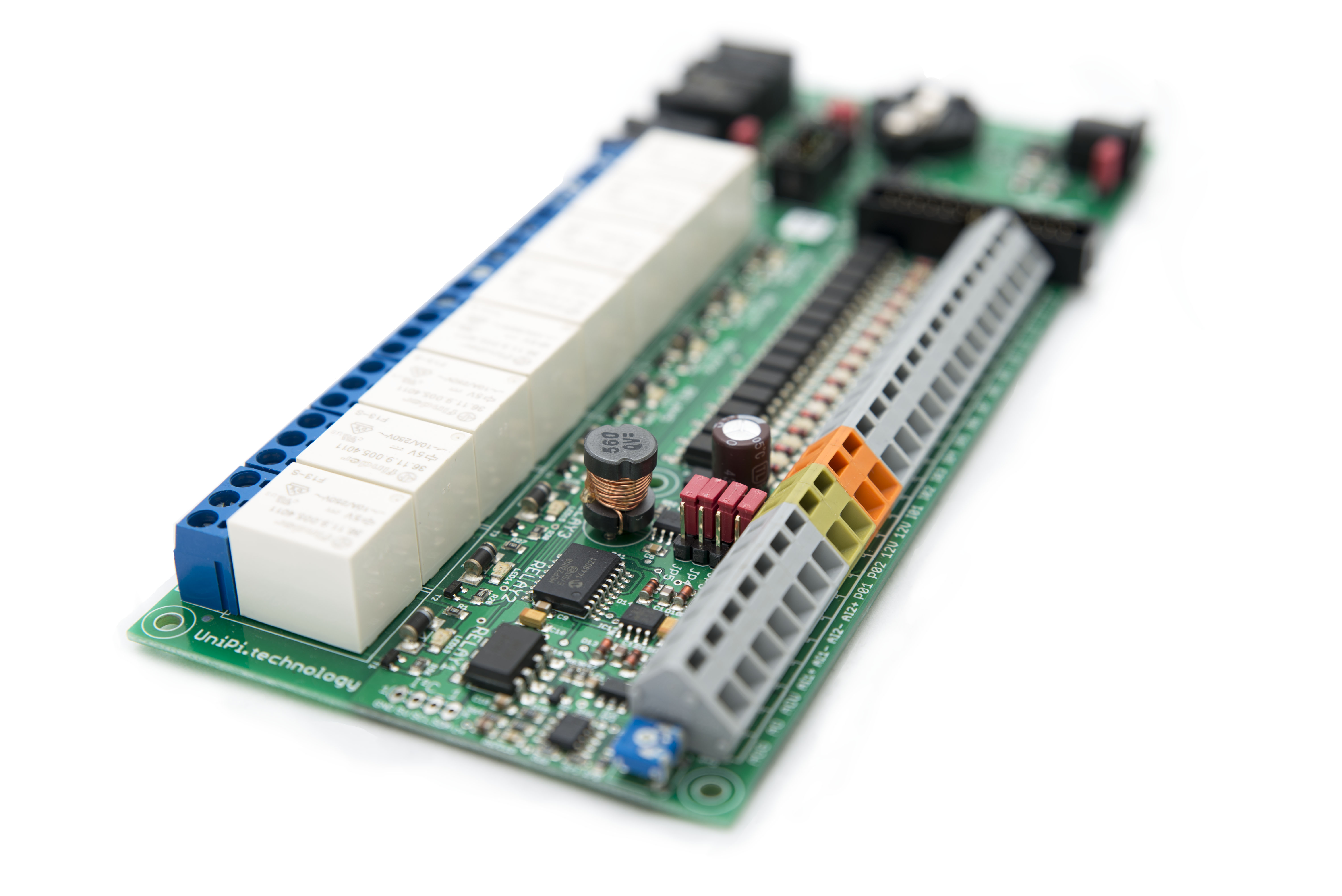

CODESYS for UniPi 1.0.2.0

CODESYS for UniPi 1.0.2.0 is available for download from the CODESYS store. Changes: Addition of support for digital inputs I13 and I14 using a custom cable. Enhanced support for one wire expansion modules, giving better behaviour on comms or power failure to the module.

Learning Python

Python has never been a language I have had to know well. I’ve adapted existing scripts and created a few simple scripts from scratch, but I haven’t learnt it properly, just the parts I’ve needed. I decided it was about time I learnt the language properly. A friend recommended that I take a look at Python Koans....

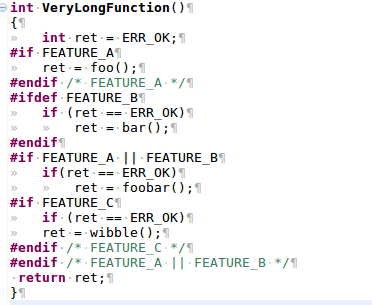

Refactoring C to Remove Feature Flags

You’ve read the books on refactoring, on working with legacy code, on unit testing and on TDD. Then you look at the codebase you’ve inherited: it’s written in C, and it’s riddled with conditional compilation. Where do you start? In years gone by feature flags were widely used in embedded systems as a means of having...

Developing the CODESYS runtime with TDD

I was recently working in the CODESYS runtime again, developing some components for a client, and I thought the experience would make the basis of a good post on bringing legacy code into a test environment to enable Test Driven Development (TDD). The CODESYS runtime is a component-based system, and for most device manufacturers is...

CODESYS for UniPi

Today I have released CODESYS for UniPi for free download from the CODESYS store. This package adds a comprehensive set of drivers to CODESYS for Raspberry Pi allowing full use of the features of the UniPi Extension board from within CODESYS.

Getting Started with Yocto on the Raspberry Pi

I've been wanting to have a play with Yocto, so I decided to have a go at getting an image running on a Raspberry Pi. I found plenty of references but no step-by-step guide that just worked. This post just covers my notes on how to get going. The Yocto Project Quick Start states "In...

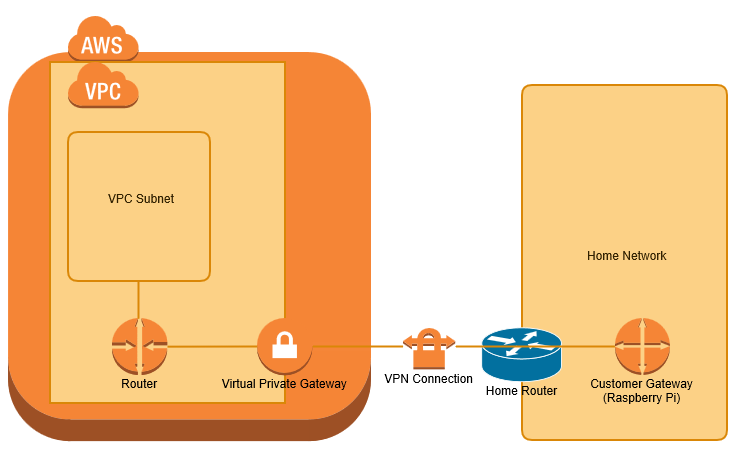

VPN bridge from home network to AWS VPC with Raspberry Pi

I wanted to extend my home network to a Virtual Private Cloud (VPC) within Amazon Web Services (AWS), primarily for use as a Jenkins build farm. I have achieved this using a Raspberry Pi as my Customer Gateway device. This post covers the process of configuring both the Raspberry Pi and AWS from scratch. I'm...

Install Raspberry Pi img using OSX

Up to now I have used win32Diskimager.exe in a Windows VM to program images onto SD cards for the Raspberry Pi. For some reason, having not done this for a while, it has stopped working for me, so I decided to program using OSX directly. Downloading: Firstly, I downloaded and unzipped the Raspbian image in my...