Today I have released CODESYS for UniPi as a free download from the CODESYS store. This package adds a comprehensive set of drivers to CODESYS for Raspberry Pi, allowing full use of the features of the UniPi Extension board from within CODESYS.

Tag: RaspberryPi

Getting Started with Yocto on the Raspberry Pi

I've been wanting to have a play with Yocto, so I decided to have a go at getting an image running on a Raspberry Pi. I found plenty of references but no step-by-step guide that just worked. This post covers my notes on how to get going. The Yocto Project Quick Start states "In...

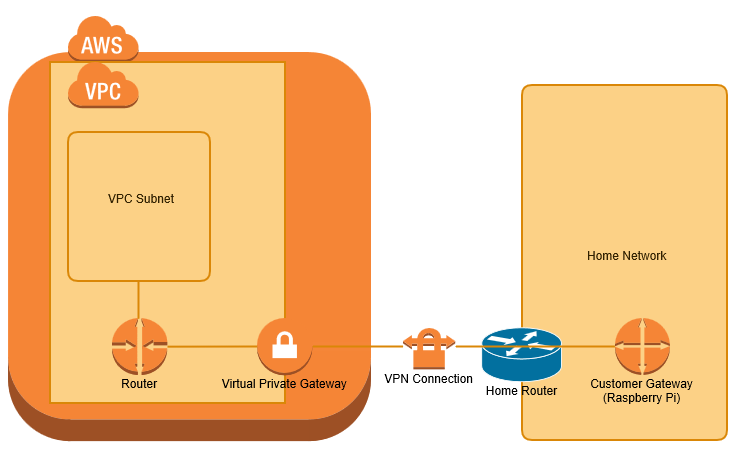

VPN bridge from home network to AWS VPC with Raspberry Pi

I wanted to extend my home network to a Virtual Private Cloud (VPC) within Amazon Web Services (AWS), primarily for use as a Jenkins build farm. I have achieved this using a Raspberry Pi as my Customer Gateway device. This post covers the process of configuring both the Raspberry Pi and AWS from scratch. I'm...

Install Raspberry Pi img using OSX

Up to now I have used Win32DiskImager.exe in a Windows VM to program images onto SD cards for the Raspberry Pi. Having not done this for a while, I found it had stopped working for me, so I decided to program the card using OSX directly. Downloading: Firstly, I downloaded and unzipped the Raspbian image in my...

Starting with CODESYS on the Raspberry Pi – Update

Last May I blogged about Starting with CODESYS on the Raspberry Pi. Since then I have found it a great teaching platform to help others learn CODESYS. My getting started tutorial is now a little out of date, so I thought I would reproduce it using current versions of software. This blog walks through the...

Cross development using Eclipse and GCC for the RPi

Introduction: When I decided to use Eclipse for cross development for the Raspberry Pi, hosted on Windows, I was surprised to find that the many useful posts elsewhere either gave only part of the solution or worked well only on a Linux host. I found both of the sites below to be...

Backup and Restore Raspberry Pi to Synology DiskStation

Introduction: There are many articles on how to back up Raspberry Pi systems. Up to now I have relied on taking card images and storing these on a machine that is backed up. However, it is all too easy to have a few hours of fun with the Raspberry Pi and then not get round to...

Using PiFace Control and Display with CODESYS

This post is intended for CODESYS beginners. It aims to give a step-by-step guide to creating a CODESYS project for the Raspberry Pi with the PiFace Control and Display add-on board. (I am actually using PiFace Control and Display 2, but it appears to be compatible.) Start by creating a new standard...

Configuring a Jenkins Slave to build VS2013 projects

Introduction: I have previously installed Jenkins, SVN and Trac on a single Raspberry Pi 2, with the intention of having a home Continuous Integration (CI) server. I now want to get a Visual Studio 2013 solution built by Jenkins. As a first project I have decided to get googletest (gtest) to build. As a precursor...

Integrating Trac and Jenkins

In this post I cover how I have integrated Trac and Jenkins using HudsonTracPlugin and Jenkins Trac Plugin. I am running both Trac and Jenkins on a Raspberry Pi 2. Trac Plugin: The Trac Plugin is a plugin for Jenkins that creates links from Jenkins projects to Trac instances. As an example, if you committed a change with the following...